AI is quickly working its way into the foundation of daily practice in patent prosecution and portfolio management. As adoption accelerates, AI regulation for patent lawyers is becoming an increasingly important issue for law firms and in-house counsel deploying AI tools across their workflows.

Data from analytics company Clarivate shows that AI adoption in IP workflows rose from 57% in 2023 to 85% in 2025. Many patent attorneys report that their clients, both new and existing, have moved from skepticism about AI solutions to insisting that their in-house and outside counsel adopt them swiftly.

But how does this massive shift toward incorporating AI into patent practice mesh with the rapidly evolving framework of AI regulation? The significant consequences that stem from the provision of legal services draw a great deal of attention from enforcement agencies and lawmakers. Since the European Union set the direction of AI regulation in March 2024 by passing the AI Act, several US states have enacted AI regulations that might give patent practitioners pause when considering how to properly add AI tools to their arsenal.

Dispelling much of that confusion is Jacob Canter, Counsel at Crowell & Moring and a member of Crowell’s AI Steering Committee responsible for vetting AI solutions incorporated across their firm, including their IP practice.

In recent months, Canter has written about shifting legal liability for AI chatbot use in California and discussed changes in federal and state-level AI policy in Crowell webinars. “Generally these laws have been targeting data governance and consumer rights, or outcomes in significant contexts like employment,” Canter said, although he noted that discussions around product liability standards have begun to percolate.

Canter recently spoke with DeepIP to walk us through several AI regulatory considerations important to patent practitioners, including which states are developing the strictest regulations and the potential impact of federal policy harmonizing state laws. These insights can help ensure your patent practice complies with quickly developing regulatory frameworks across the entire federal jurisdiction that US patent law encompasses.

Why AI Regulation Matters for Patent Law Firms

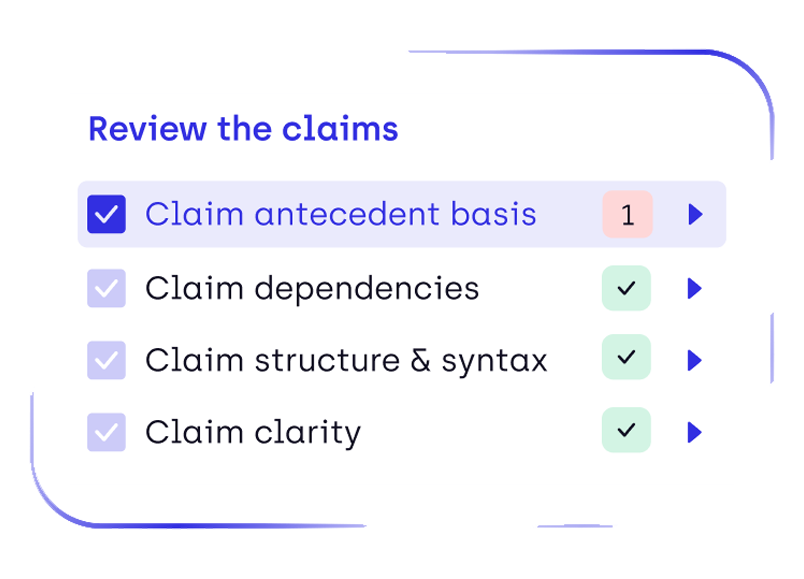

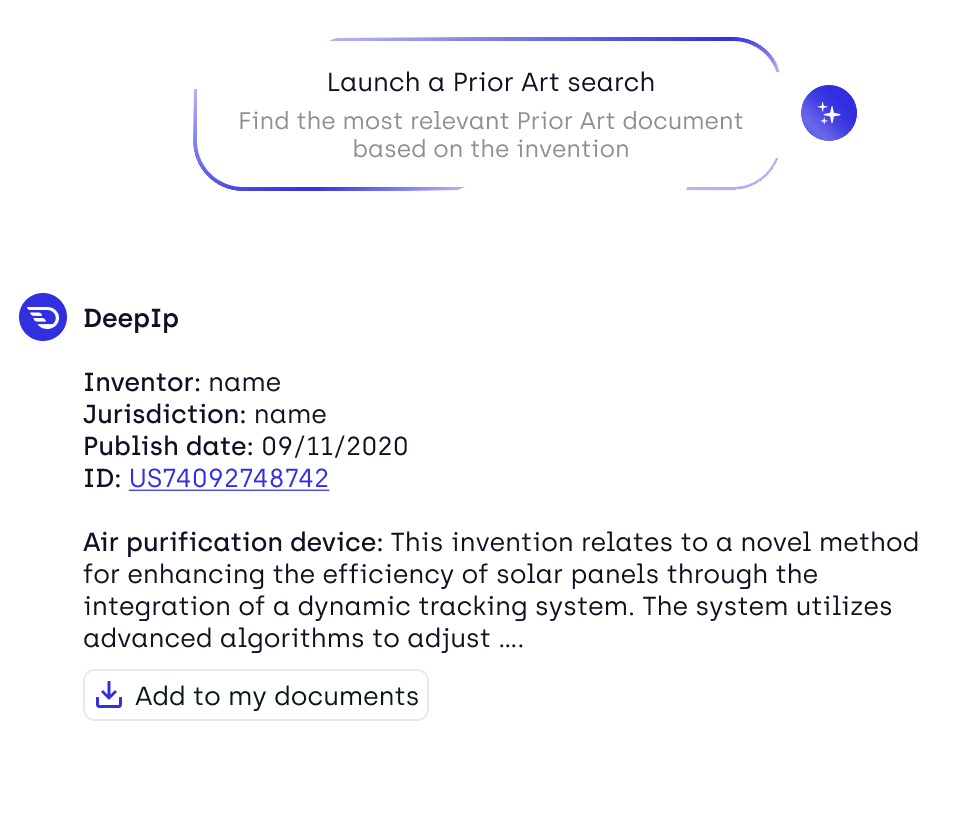

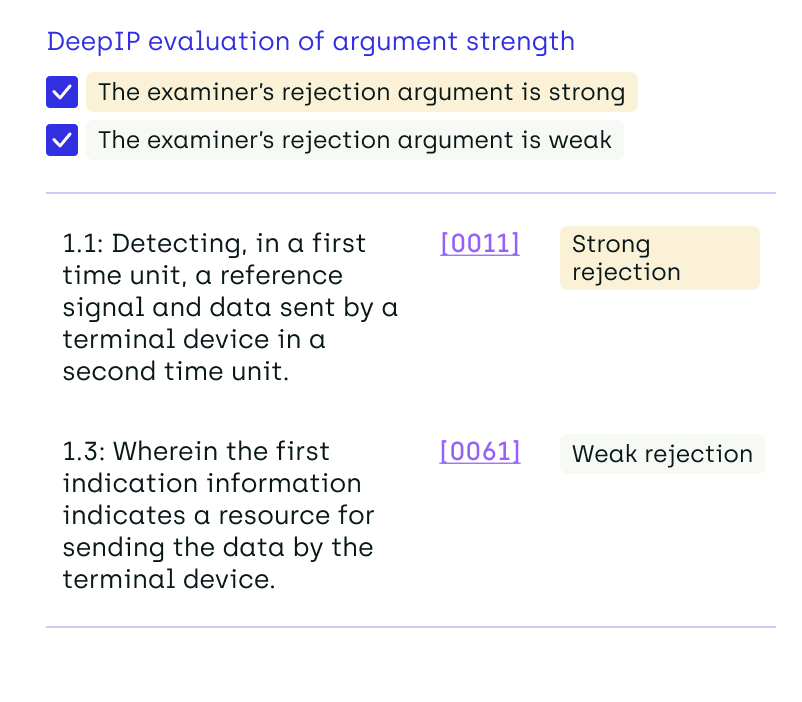

Patent lawyers increasingly rely on AI tools for tasks such as prior art search, patent drafting, office action analysis, and portfolio management. These tools can deliver substantial efficiency gains, but they also introduce new regulatory considerations around data governance, transparency, and accountability.

As government bodies develop AI regulatory frameworks, legal professionals must ensure that their use of generative AI systems complies with evolving rules on data protection, algorithmic transparency, and professional responsibility. For patent practitioners, this issue is particularly important because patent prosecution, portfolio management, and legal strategy involve decisions that affect valuable intellectual property rights.

Understanding how AI regulation intersects with patent practice is therefore becoming an essential competency for modern IP teams.

AI Regulation in California: Why Patent Law Firms Should Pay Attention

In 2024 alone, California passed 18 laws related to AI governance, and the largest US state continues to pave the way toward a regulatory framework for AI. In our discussion with Canter, he identified several bills and other state developments that could impact various aspects of a legal practice’s daily business:

Transparency in Frontier Artificial Intelligence Act (TFAIA)

Designed to provide oversight of high-revenue companies employing highly capable “frontier models,” the TFAIA underscores the tension between explosive AI growth and slower legislative cycles. “Talking to people with technical knowledge, there’s a belief that most AI models will have enough computational power to be considered frontier models sooner rather than later, perhaps even within the next few years,” Canter said.

CCPA Automated Decision-Making Technology Regulations

In September 2025, new rules adopted by the California Privacy Protection Agency (CPPA) under the California Consumer Privacy Act (CCPA) received final approval. The rules govern automated decision-making technologies (ADMT) that businesses use to make significant decisions. Patent law firms using AI tools to automate significant decisions in portfolio management, prosecution, and other workflows could be required to notify affected clients before use and offer them a way to opt out.

Assembly Bill 2013

Effective January 1st, 2026, AB 2013 requires companies developing generative AI solutions to publicly document the training data behind those models. Patent firms developing in-house AI solutions could find themselves having to track and disclose training data, though enforcement mechanisms under AB 2013 are vague according to a Crowell client alert on the bill.

Other US States Expanding AI Regulation

California might be home to the greatest number of state-level regulatory efforts on AI use impacting firms in the patent industry, but significant developments are also being seen in other states.

- In September 2024, Texas Attorney General Ken Paxton obtained the nation’s first settlement over false statements regarding the accuracy of AI use in a professional services sector.

- In New York, state senators passed the Responsible AI Safety and Education (RAISE) Act, which follows California’s definition of “frontier model” while increasing reporting requirements and civil penalties for violators.

- State lawmakers in Colorado passed a law in 2024 requiring certain businesses that deploy high-risk AI systems to make their IT systems available for inspection by regulators.

- In July 2025, Massachusetts Attorney General Andrea Joy Campbell secured a $2.5 million settlement from a private sector company due to algorithmic discrimination marginalizing certain consumer groups.

EU AI Act vs US Policy: What It Means for Patent Lawyers

States passing legislation to regulate uses of AI within their borders have tended to borrow the regulatory structure established by the European Union’s landmark AI Act. This law, enacted in 2024, created the risk-based categories that US state legislators have followed when drafting their own regulatory bills. Annexes to the EU’s AI Act include among high-risk AI systems those used in applying the law to a concrete set of facts, which could encompass AI tools used as patent portfolio management or patent drafting solutions.

However, Canter noted that the US federal government has charted a much different direction than the EU under the Trump administration. An executive order issued by President Trump days after taking office in January 2025 called for the removal of regulatory barriers preventing American dominance in AI and for the development of an action plan on AI competitiveness.

The US federal government’s focus on promoting American innovation in artificial intelligence reflects a much different approach than state-level frameworks. Canter highlighted several actions taken within the executive branch that provide some guidance to patent firms implementing AI tools:

- A December 2025 executive order calling for a single national framework for AI regulation that is “minimally burdensome” on AI adopters.

- A July 2025 executive order on promoting AI exports that seeks to accelerate AI deployment and remove regulatory measures impeding American competitiveness.

Other branches of the US federal government have been more cautious in addressing how AI is deployed by businesses, especially those in the legal industry. A total of 59 standing orders on the use of generative AI in patent infringement matters are currently being enforced in US district courts, according to Law360’s AI standing order tracker.

Over in the US Senate, federal lawmakers are debating the AI LEAD Act, which as of this writing is awaiting further markup at the Senate Judiciary Committee. If passed as currently drafted, the AI LEAD Act would introduce product liability standards into the AI context and even make businesses deploying AI systems fully liable if US courts can’t exercise jurisdiction over the system’s developer.

Practical AI Compliance Strategies for Patent Law Firms, According to Crowell & Moring’s Jacob Canter

AI solutions provide incredible value to patent practitioners because they can quickly handle mentally taxing tasks such as drafting patent claims or legal arguments responsive to office actions. Other AI-powered tools provide reasonable, concrete assessments of asset value within larger portfolios, revealing insights that inform the work of licensing and portfolio management teams regardless of whether an attorney is working in-house or serving as outside counsel.

However, given the significant consequences that stem from the provision of legal services, it’s reasonable to take proactive steps ensuring your adoption of AI tools complies with developing regulatory standards.

Canter, who regularly interfaces with these regulatory issues from a legal professional’s perspective, offers a roadmap of practical steps patent firms can take when performing due diligence on potential AI vendors:

1. Audit How the AI Tool Utilizes Client and Portfolio Data

Concerns over a vendor’s use of client information are constantly discussed among members of Crowell’s AI Steering Committee.

“The first, second, and third issues I discuss with AI vendors are protecting client data,” Canter said, adding that many firms focus on AI’s productivity gains to the detriment of this critical ethical consideration.

Review all vendor use agreements closely to confirm that your solution providers use industry-standard security measures. Also, be wary of storage and deletion protocols that create opportunities for unintended breaches of confidentiality, which can put entire patent families at risk if those disclosures trigger time bars under statutes governing the novelty of patented inventions.

2. Pick Solutions Compliant With the Strictest Regulatory Frameworks

While there are some diverging views, Canter believes that California is developing the most robust regulatory framework for AI. Patent firms, which practice under federal jurisdiction and often work with clients in many states, especially for patent prosecution, should continue to review legislative developments and enforcement efforts in California, New York, and other states actively pursuing AI regulation.

“For a small or mid-sized business that wants to be selling nationwide, it’s more practical to meet the strictest requirements rather than trying to comply with individual state laws,” Canter said. Of the state-level AI regulations currently enacted, laws targeting high-risk business applications or significant decision-making that affects legal rights are the most likely to impact patent practice.

For AI solutions that directly impact major patent portfolio decisions, whether for patent grants currently in force or applications still under prosecution at the agency, ensure you are working with AI vendors that meet the most stringent disclosure and transparency requirements that may apply to you.

3. Clearly Communicate Compliance Across Technical Barriers

While patent attorneys are a tech-savvy bunch, addressing AI learning curves early helps ensure compliance as you adopt solutions into your patent drafting, prosecution, and portfolio management workflows.

Canter notes that difficulties in communicating best practices for particular AI tools often present themselves along generational lines: “There are senior lawyers using the tools really well and younger lawyers who haven’t been trained on the tools, but the newer generation that grew up with these technologies are much more comfortable with AI.”

Once an AI solution has been vetted and approved for use, take the conversations you’ve had with your vendor about data governance and safeguards and make sure every practitioner interfacing with the solution grasps those technical details.

Conclusion

In short, AI regulation for patent lawyers is moving toward stricter transparency, accountability, and governance standards, making vendor selection and internal compliance frameworks increasingly important.

Maintaining compliance with developing regulatory standards is best accomplished through diligent research, which is a part of daily business in legal services. Regardless of practice setting, patent practitioners should spend adequate time during AI adoption efforts to parse developments in consumer safety and product liability standards being applied to the sector. Those who do will be best prepared to harness the continuing wave of AI innovation unlocking great productivity gains for patent practices across the world.

FAQ: AI Regulation for Patent Lawyers

What AI regulations affect patent lawyers in the United States?

Patent lawyers must comply with emerging AI regulations at both the state and federal levels. States such as California, New York, Colorado, and Massachusetts have introduced laws governing automated decision-making, generative AI transparency, and algorithmic accountability. Federal initiatives, including executive orders and proposed legislation like the AI LEAD Act, may further establish national frameworks affecting how law firms deploy AI tools.

Can patent lawyers use generative AI tools for patent drafting and prosecution?

Yes, patent lawyers can use generative AI tools for tasks such as patent drafting, prior art search, office action analysis, and portfolio management. However, law firms must ensure these tools comply with professional responsibility rules, protect client confidentiality, and meet emerging AI governance and transparency requirements. Many firms now implement internal AI policies to manage these risks.

How does the EU AI Act affect patent law firms?

The EU AI Act introduces a risk-based framework regulating artificial intelligence systems, including certain “high-risk” applications. AI tools used in legal analysis, patent drafting, or portfolio management could potentially fall within these categories. Patent law firms working with European clients or deploying AI systems in EU jurisdictions may need to comply with requirements related to documentation, transparency, and oversight.

What compliance risks should patent law firms consider when adopting AI tools?

Key compliance risks when adopting AI tools include:

- Client data confidentiality and security

- Transparency regarding AI training data

- Vendor compliance with emerging AI regulations

- Potential liability for AI-generated outputs

- Governance policies for responsible AI use

Conducting vendor due diligence and implementing internal AI governance frameworks can help mitigate these risks.

Why is California important for AI regulation affecting law firms?

California is currently one of the most active jurisdictions developing AI governance laws in the United States. Legislation addressing automated decision-making technologies, generative AI transparency, and algorithmic accountability has made the state a major influence on national regulatory discussions. Because many technology companies and AI developers operate there, California’s policies often shape AI compliance strategies for law firms nationwide.

Will AI regulation slow adoption of AI tools in patent practice?

Most experts expect AI adoption in patent practice to continue growing despite new regulations. AI tools already support tasks such as prior art search, patent drafting assistance, and portfolio analytics. Regulatory frameworks are more likely to encourage responsible adoption rather than prevent it, pushing law firms to select AI platforms that prioritize data governance, transparency, and regulatory compliance.

How should patent law firms evaluate AI vendors for compliance?

Patent law firms should evaluate AI vendors based on:

- Data protection and confidentiality safeguards

- Transparency around training data and model governance

- Compliance with evolving AI regulatory frameworks

- Security standards and auditability

- Clear policies for data storage and deletion

Selecting AI platforms that meet the strictest regulatory standards helps firms remain compliant across multiple jurisdictions.
