Why AI Data Security Is Becoming a Strategic Priority for Patent Lawyers

Artificial intelligence is rapidly reshaping how intellectual property work is performed. Patent drafting, prior art analysis, invention review, and portfolio intelligence are increasingly supported by AI-powered tools designed to accelerate legal workflows.

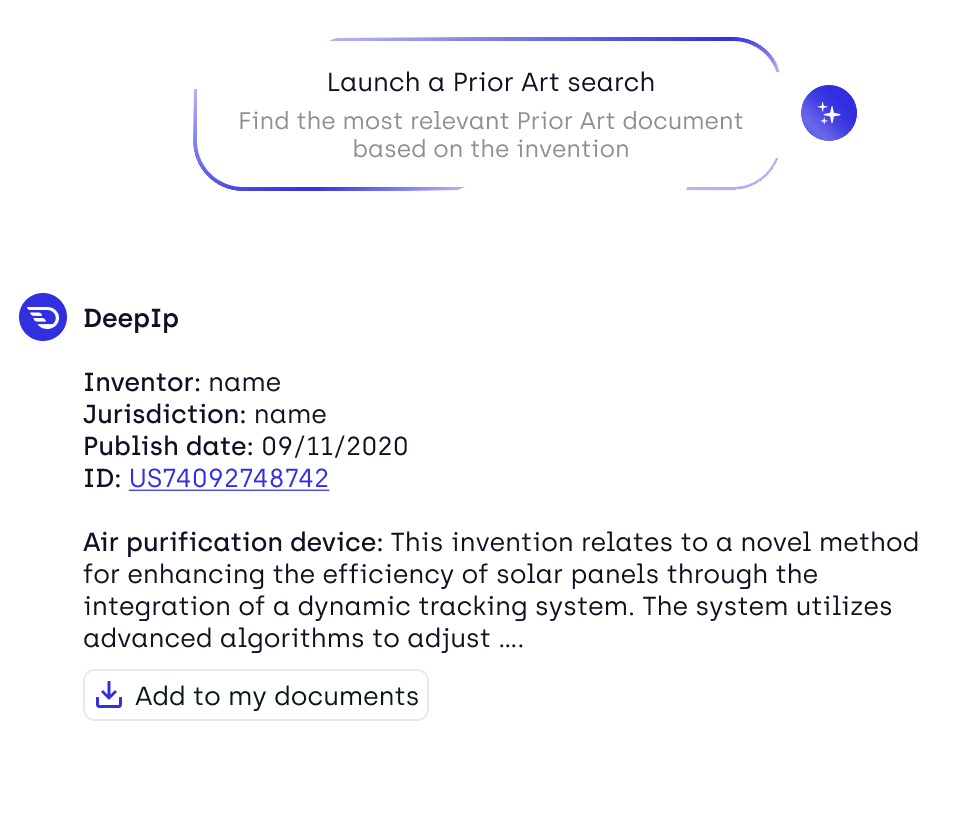

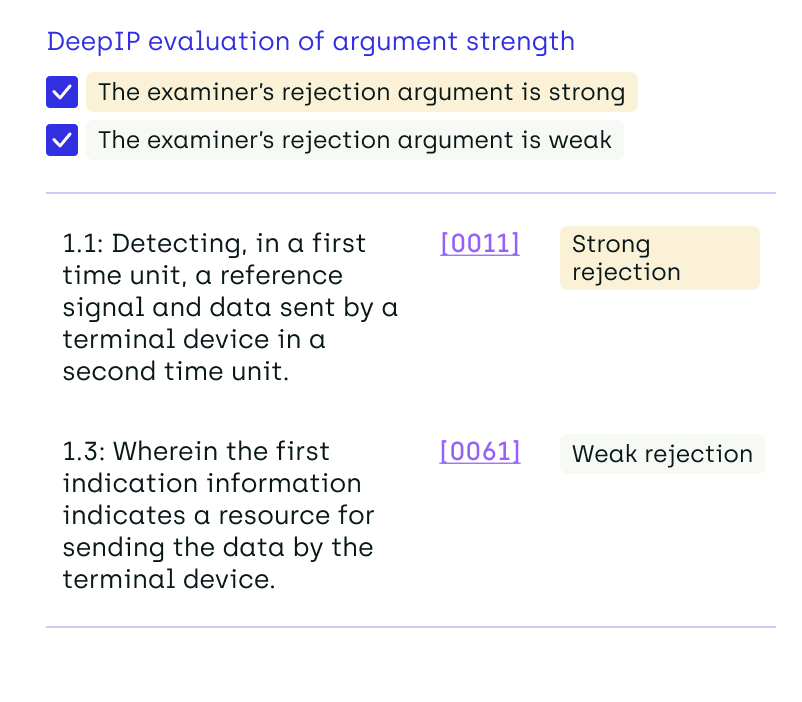

For patent practitioners and IP teams, the productivity gains are significant. AI systems can analyze thousands of documents in seconds, surface relevant prior art at a scale that was previously impractical, and assist with drafting complex patent specifications. The tools carrying out this analysis, however, must meet the same confidentiality standards as the rest of a firm's infrastructure.

But as adoption accelerates, a new question is emerging across the legal industry: how secure is AI when used with confidential legal data? This concern is not hypothetical, and it is why AI data security has become a defining issue for law firms handling sensitive client information.

Law firms handle some of the most sensitive commercial information in the global economy, including unpublished inventions, trade secrets, and corporate R&D strategies. When that information is processed through AI platforms, the stakes become extremely high.

At the same time, AI adoption across the legal profession is accelerating rapidly. Surveys of thousands of legal professionals show that AI usage among lawyers has risen sharply in recent years as firms experiment with generative AI for research, drafting, and document analysis. From patent prosecution to portfolio review, AI-powered platforms are being integrated across every stage of the IP workflow. The combination of rapid technological adoption and highly sensitive client data is creating a new operational challenge for law firms: AI data security.

For IP professionals in particular, the issue goes beyond cybersecurity. A data breach involving patent drafts or invention disclosures could expose confidential innovation and potentially undermine patent strategies.

As Bloomberg Law has noted, generative AI introduces not only efficiency gains but also new risks involving client confidentiality, privacy, and intellectual property protection.

The challenge for patent practitioners is therefore clear: how to leverage AI while maintaining the strict confidentiality standards that legal practice requires.

The New Cybersecurity Risks AI Introduces for IP Firms

The rise of generative AI in legal practice is creating new categories of security risk that traditional law firm infrastructure was not designed to handle.

One of the most widely discussed concerns involves confidential data leakage.

Many consumer AI tools operate through shared cloud environments, where prompts and outputs may be logged or used to improve underlying models. When lawyers input sensitive information, such as unpublished patent claims or invention disclosures, into such systems, that information could be retained or exposed.

For patent practitioners, even the perception of disclosure can create problems. Patent law relies heavily on novelty and confidentiality. If details of an invention become public prematurely, the disclosure could affect patentability or create strategic disadvantages.

Another emerging threat is the growing sophistication of cyberattacks targeting the legal sector.

Law firms represent attractive targets because they hold large volumes of commercially valuable information. Patent filings, licensing strategies, pharmaceutical research, and emerging technology disclosures all represent high-value data for competitors or malicious actors.

Recent industry analysis shows that the legal sector is increasingly confronting cyber risks associated with AI adoption, including new attack methods such as LLMjacking, in which attackers use stolen credentials to hijack access to AI models hosted in cloud environments.

These developments mean that the security architecture of AI tools used in legal practice is no longer a technical detail. It is a strategic requirement.

Why AI Governance Is Now a Leadership Issue in IP Practice

As these risks become clearer, many law firms are beginning to treat AI governance as a leadership responsibility rather than a purely technical issue.

Senior partners, innovation teams, and heads of IP are increasingly involved in evaluating how AI tools are deployed inside the firm.

This shift reflects a broader change in how legal organizations think about technology adoption. Instead of simply asking whether AI can improve productivity, firms are asking more complex questions:

- Where can AI safely be used within legal workflows?

- Which tools meet the confidentiality standards required for client work?

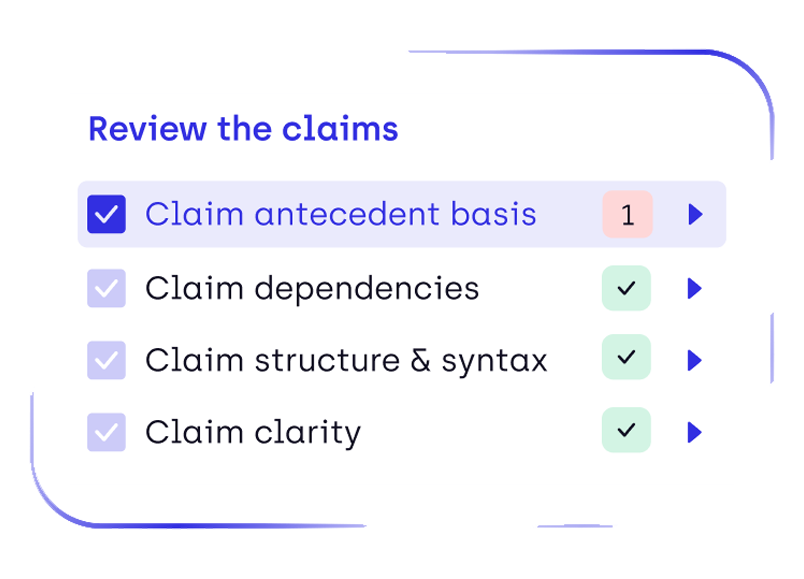

- How should lawyers review and validate AI outputs?

These governance questions are particularly important in intellectual property work, where the confidentiality of technical information is central to the value of the legal service itself.

Forward-thinking firms are therefore beginning to establish internal AI usage policies that address issues such as data handling, tool approval, and review procedures.

Such governance frameworks help ensure that lawyers can experiment with AI tools while maintaining the ethical obligations of legal practice.
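The tool-approval part of such a policy can be expressed as a simple check. The following is a minimal sketch, assuming a firm classifies data into three sensitivity tiers and records, for each approved tool, the highest tier it may handle. The tier names, tool names, and the `is_use_permitted` function are all hypothetical and serve only as illustration.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical data tiers, ordered from least to most sensitive."""
    PUBLIC = 1
    INTERNAL = 2
    CLIENT_CONFIDENTIAL = 3


# Hypothetical registry: each approved tool maps to the highest tier it may process.
APPROVED_TOOLS = {
    "consumer_chatbot": Sensitivity.PUBLIC,
    "firm_approved_legal_ai": Sensitivity.CLIENT_CONFIDENTIAL,
}


def is_use_permitted(tool: str, data: Sensitivity) -> bool:
    """Allow use only if the tool is registered and cleared for this tier."""
    ceiling = APPROVED_TOOLS.get(tool)
    return ceiling is not None and data <= ceiling


# An unpublished claim set would be CLIENT_CONFIDENTIAL in this scheme.
allowed_consumer = is_use_permitted("consumer_chatbot", Sensitivity.CLIENT_CONFIDENTIAL)
allowed_approved = is_use_permitted("firm_approved_legal_ai", Sensitivity.CLIENT_CONFIDENTIAL)
```

Unregistered tools are denied by default, which mirrors the idea that a tool must be approved before it touches client work.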

How Secure Legal AI Platforms Protect Patent Data

The emergence of specialized legal AI platforms reflects the growing need for secure AI tools designed specifically for confidential legal workflows.

Unlike general-purpose AI tools, legal AI platforms must provide three critical safeguards:

- Data encryption to protect confidential documents

- Controlled access to ensure only authorized professionals interact with sensitive information

- Data governance policies preventing confidential inputs from being retained or reused

DeepIP was designed with these requirements in mind.

The platform incorporates enterprise security standards that align with recognized cybersecurity frameworks, including the NIST SP 800-53 Moderate baseline and the NIST Cybersecurity Framework, both widely used in risk management across regulated industries.

Encryption safeguards confidential patent data throughout the workflow. Information is protected both in transit through TLS protocols and at rest through AES-256 encryption, ensuring that sensitive documents remain secure at every stage of processing.

Equally important is the platform's zero-retention approach to AI processing. One of the major concerns raised by patent professionals is whether confidential prompts could be incorporated into future training datasets.

DeepIP addresses this risk by ensuring that user data is not retained beyond what is required to process the request. External AI vendors do not have access to confidential patent drafts, and client data never becomes part of publicly accessible models.

The platform also aligns with widely recognized compliance standards and regulations, including SOC 2 Type II, ISO 27001, ISO 42001, HIPAA, and GDPR, providing assurance that its data protection practices meet international regulatory requirements.

For IP firms managing sensitive innovation data across jurisdictions, these safeguards are critical.

How DeepIP Ensures AI Data Security for Patent Professionals

For IP teams evaluating AI tools for patent drafting and analysis, security architecture is one of the most important criteria. Patent professionals routinely work with unpublished inventions, confidential R&D disclosures, and proprietary technical information. Any AI platform used in these workflows must therefore meet the same confidentiality standards expected of legal infrastructure.

DeepIP was designed specifically for secure AI workflows in intellectual property practice, combining generative AI capabilities with enterprise-grade data governance.

The platform incorporates several layers of protection intended to safeguard sensitive patent data throughout the AI workflow.

Security Frameworks Aligned with Enterprise Standards

DeepIP’s security model follows widely recognized cybersecurity frameworks used in regulated industries.

The platform aligns with:

- NIST SP 800-53 Moderate baseline controls, a security and privacy control catalog commonly used in U.S. federal and enterprise systems

- The NIST Cybersecurity Framework (CSF), which provides structured guidance for risk management and continuous monitoring

These frameworks guide how the platform manages access control, vulnerability management, and infrastructure security. DeepIP also conducts regular vulnerability assessments and penetration testing to identify and address potential weaknesses.

For IP firms managing confidential invention disclosures and patent drafts, adherence to established cybersecurity standards is critical.

End-to-End Encryption for Confidential Patent Data

Protecting sensitive legal data requires strong encryption across the entire lifecycle of the information.

DeepIP secures patent data using:

- TLS 1.2+ encryption for data in transit, preventing interception while information moves between systems

- AES-256 encryption for data at rest, ensuring stored patent drafts and AI-generated outputs remain protected

These encryption protocols are widely used across enterprise infrastructure and financial systems because of their robustness.

For patent practitioners, this means that confidential documents remain protected whether they are being uploaded, processed by AI models, or stored within the platform.
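On the client side, the "TLS 1.2+" requirement for data in transit can be enforced with Python's standard `ssl` module. This is a minimal sketch, not a description of how DeepIP configures its systems. The function name `make_client_context` is an assumption for the example.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client TLS context that refuses protocol versions below TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Reject any handshake that would negotiate a version older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


context = make_client_context()
```

A context like this can be passed to standard-library HTTP or socket clients so that connections to servers offering only older protocol versions fail instead of silently downgrading.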

Zero-Retention Architecture for Generative AI Workflows

One of the most common concerns raised by law firms evaluating generative AI tools is data retention.

Some consumer AI systems may store prompts or incorporate them into training datasets. For legal professionals handling confidential client information, this creates potential confidentiality risks.

DeepIP addresses this concern through a zero-retention architecture designed specifically for legal workflows.

Key principles of this approach include:

- Private, dedicated infrastructure environments

- User prompts and outputs not retained beyond what is required to process requests

- No external AI provider access to confidential patent drafts or client data

This ensures that confidential legal information submitted through the platform never becomes part of publicly accessible training data.
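The principle of not retaining prompts and outputs beyond the request can be illustrated with a small sketch. It assumes a request handler that holds document text only in memory while the request runs and records only content-free metadata afterwards. It is not DeepIP's implementation, and `RequestRecord`, `process_without_retention`, and `echo_model` are hypothetical names.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class RequestRecord:
    """Metadata kept after a request; deliberately holds no document text."""
    request_id: str
    received_at: str
    input_chars: int
    output_chars: int


def process_without_retention(
    text: str, model: Callable[[str], str]
) -> tuple[str, RequestRecord]:
    """Run `model` on `text` and return the output plus a content-free record."""
    output = model(text)
    record = RequestRecord(
        request_id=str(uuid.uuid4()),
        received_at=datetime.now(timezone.utc).isoformat(),
        input_chars=len(text),
        output_chars=len(output),
    )
    # Nothing is written to disk or to a log here; only sizes and a
    # timestamp survive once the caller discards `text` and `output`.
    return output, record


def echo_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return prompt.upper()


draft = "A widget comprising a sprocket."
result, record = process_without_retention(draft, echo_model)
```

The design choice is that anything persisted for operations or billing is derived from the request without containing its content.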

Compliance with International Security and Privacy Standards

DeepIP also aligns its infrastructure with widely recognized compliance standards and regulations, including:

- SOC 2 Type II, validating secure data handling and operational controls

- ISO 27001, the international standard for information security management systems

- ISO 42001, the international standard for AI management systems

- GDPR, ensuring compliance with European data protection regulations

- HIPAA, reflecting infrastructure capable of supporting highly sensitive regulated data

For global IP firms operating across jurisdictions, alignment with these standards and regulations provides assurance that the platform meets stringent international data protection requirements.

Security as a Foundation for AI Adoption in IP Practice

As AI becomes embedded in patent drafting, prior art analysis, and portfolio intelligence workflows, security will remain one of the most important factors in technology adoption.

IP professionals must be able to trust that AI systems protect confidential innovation data with the same rigor applied to traditional legal infrastructure.

DeepIP’s security-first architecture reflects this principle: AI tools for patent professionals must combine advanced automation with strict confidentiality safeguards.

AI Is Becoming a Tool for Strengthening Cybersecurity

While much of the discussion focuses on AI’s risks, the technology can also improve security.

AI-driven monitoring systems can analyze network activity and identify anomalies that might indicate attempted breaches. Because these systems process large volumes of signals in real time, they can detect suspicious behavior earlier than traditional rule-based cybersecurity tools.
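The idea of flagging activity that deviates from a baseline can be shown with a deliberately simple statistical stand-in. The sketch below uses a z-score test rather than a machine learning model, and the download counts and the `find_anomalies` function are hypothetical.

```python
from statistics import mean, stdev


def find_anomalies(
    history: list[int], recent: list[int], threshold: float = 3.0
) -> list[int]:
    """Return indices in `recent` whose value lies more than `threshold`
    standard deviations from the mean of `history`."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # With a perfectly flat baseline, any different value is unusual.
        return [i for i, value in enumerate(recent) if value != mu]
    return [i for i, value in enumerate(recent) if abs(value - mu) / sigma > threshold]


# Hypothetical hourly document-download counts for one account.
baseline = [12, 15, 11, 14, 13, 12, 16, 14]
today = [13, 15, 240, 12]
flagged = find_anomalies(baseline, today)  # index 2 (240 downloads) is flagged
```

Production monitoring systems typically combine many signals and learned models, but the underlying step of comparing new activity against an established baseline is the same.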

AI is also increasingly used to automate compliance monitoring.

Systems can track how sensitive information is handled, flag policy violations, and generate audit trails required for regulatory reporting. For global law firms managing cross-border client data, these capabilities can significantly reduce operational risk.
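An audit trail is more useful for regulatory reporting when later tampering is detectable. One common way to achieve this is to chain entries by hash, sketched below with the standard library. The field names and the `append_entry` and `verify_chain` functions are assumptions for the example, and timestamps are omitted to keep it short.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash an entry body in a stable, key-sorted JSON form."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], actor: str, action: str, resource: str) -> dict:
    """Append an entry whose hash also covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action, "resource": resource, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash and check each link to the preceding entry."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, "associate_a", "viewed", "draft-001")
append_entry(audit_log, "associate_b", "exported", "draft-001")
chain_ok = verify_chain(audit_log)
```

Editing any earlier entry changes its hash and breaks every later link, so `verify_chain` returns `False` after such a change.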

In other words, AI is not only a potential vulnerability. When deployed properly, it can become part of the solution.

The Future of AI in IP Practice Depends on Trust

The adoption of AI across the legal profession is accelerating. Many firms are already integrating AI into legal research, document analysis, and drafting workflows in order to remain competitive and meet client expectations for efficiency.

But for intellectual property professionals, the success of AI adoption ultimately depends on trust.

Patent lawyers must be confident that AI systems protect the confidentiality of client innovations and comply with the strict ethical obligations governing legal practice.

Secure AI platforms designed specifically for legal workflows allow firms to capture the benefits of automation while maintaining control over sensitive information.

For IP teams navigating this transition, the objective is clear: embrace AI innovation without compromising data security.

FAQ: AI Data Security for Patent Lawyers and Law Firms

Is generative AI safe for patent drafting?

Generative AI can assist with patent drafting, but using public AI tools without security safeguards may expose confidential information. Many IP professionals prefer specialized legal AI platforms that provide encryption and strict data governance.

Can AI tools leak confidential legal data?

Yes. If an AI system stores prompts or uses them for training, confidential legal information could potentially be exposed. This is why law firms increasingly adopt AI tools with zero-retention policies.

What security standards should legal AI platforms meet?

Enterprise legal AI tools should ideally comply with frameworks such as SOC 2 Type II, ISO 27001, GDPR, and NIST cybersecurity standards to ensure robust data protection.

Why are law firms targeted by cyberattacks?

Law firms possess valuable data including intellectual property, corporate strategies, and proprietary research. This makes them attractive targets for cybercriminals and corporate espionage.

How can AI improve cybersecurity for law firms?

AI can monitor network activity, detect unusual behavior, automate compliance reporting, and identify potential threats before they escalate into breaches.
