AI is reshaping what's possible in patent practice, and the practitioners who get the most out of it are the ones who engage with it critically. The promise is tempting: give AI an initial direction, move on to other work, and let a predictive language model carry the drafting forward largely unchecked.

In practice, however, that hands-off approach rarely delivers. Outputs that look robust on the surface can be subtly disappointing under critical review: novelty blurs into the obvious, arguments drift, and assertive rhetoric lacks substantive foundation.

Ultimately, however, the problem lies not with the tools but with how we, as practitioners, think about, approach, and deploy them. Because AI’s output mimics intelligent output (and marketing emphasizes “intelligence” while glossing over the key qualifier “artificial”), it is often mistaken for real intelligence (RI). Unlike RI, though, AI is fundamentally backward-looking: it generates text by predicting from language that has already been written.

Understanding this core limitation of AI tools in patent practice also reveals some of AI's most impactful applications—including red-teaming workflows that surface examiner objections early, sharpen claim strategy, and preserve optionality before the record is locked.

The practitioners who avoid that hands-off trap are the ones unlocking AI's real productivity gains in patent practice. Done right, AI dramatically expands what a skilled practitioner can accomplish—faster prior art search, sharper claim framing, and more rigorous prosecution records than any manual workflow could produce alone.

The Risk of Hands-Off AI

Because AI is fundamentally backward-looking, it requires the most careful human oversight precisely where patent prosecution demands the most care: identifying, preserving, and highlighting what is novel. AI outputs familiar (i.e., obvious) patterns: the longer a model runs, the more plausible text it produces while quietly drifting away from what is actually novel and inventive.

This underscores the risk of delegated novelty: treating novelty as something established once and then safely handed off to AI to elaborate. Although it sounds efficient to define the inventive narrative at the outset and let AI build from there, novelty cannot be established once and then left unattended. It must be continuously maintained against developing claim scope, vetted against legal standards, and preserved as the context changes. Delegated novelty failure shows up whenever the practitioner gives an AI model initial direction and then “checks out.” The model continues generating fluent text that is increasingly unmoored from what actually meets the legal tests.

Closely related is predictive drift: the tendency of a predictive model to slide from invention-specific substance toward the familiar and conventional. Because AI is trained on prior language, it inherently gravitates toward replacing new technical substance with familiar phrasing unless a human actively intervenes. The draft improves stylistically while degrading substantively, creating the illusion of progress as inventive distinction is lost.

A third breakdown is late discovery of risk. Without meaningful review of AI-generated drafts, vulnerabilities often surface only after an office action issues. By then, the record is locked, and options that could have been preserved through earlier drafting choices are gone.

Finally, there is unchecked sycophancy. By design, AI is agreeable: it embellishes, rationalizes, and smooths over uncertainty. In prosecution, where the cost of undiscovered weakness is high and the written record is unforgiving, that tendency requires active correction.

The common thread across all four failure modes is not poor AI output—algorithms continue working exactly as humans built them—but human disengagement at precisely the point where novelty and legal framing require the most active oversight.

Introducing the Red Team Framework

The remedy for these failure modes is to correct how we think about using AI tools. Skilled human practitioners must personally and continuously own the legal frameworks governing the application (novelty, obviousness, eligibility, disclosure, and enablement) rather than treating them as one-time inputs. Patent drafting must continually be tied to what actually makes the invention patentable.

However, this is also where AI tools can be most helpful: the same predictive bias that limits AI on drafting autopilot also makes AI particularly effective at applying existing knowledge to reproduce familiar linguistic and structural patterns of rejection. The relevant question is not whether to use AI, but how workflows should be structured so that human practitioners retain ownership of patentability while AI tools amplify the output possible through human experience and real intelligence.

In particular, prosecution workflows can improve significantly in both quality and speed when AI is employed in early and iterative ‘red team’ exercises.

Used deliberately, AI can surface predictable objections long before an examiner raises them, repurposing the very weakness of AI to help human practitioners sharpen and police, throughout the drafting process, the novelty that unchecked AI discards.

This reframing turns eligibility, enablement, and technical-effect considerations from post-hoc arguments developed after problems arise into recurring tests applied throughout drafting and response. Although prosecution rejections and validity challenges will never be entirely eliminated, AI red teaming can expose them efficiently while wording and strategy can still be shifted easily, increasing strength and value and preserving optionality. In other words, workflows should be purpose-designed so that AI red teams pressure-test substance against known rejection patterns, while humans maintain and direct the legal strategy and framing.

AI Role Prompting

This red team framing works because it recognizes AI’s strengths and weaknesses. Invention drafting is where AI requires the most human direction—novelty by definition lacks strong linguistic precedent, so AI fills gaps by analogy rather than distinction.

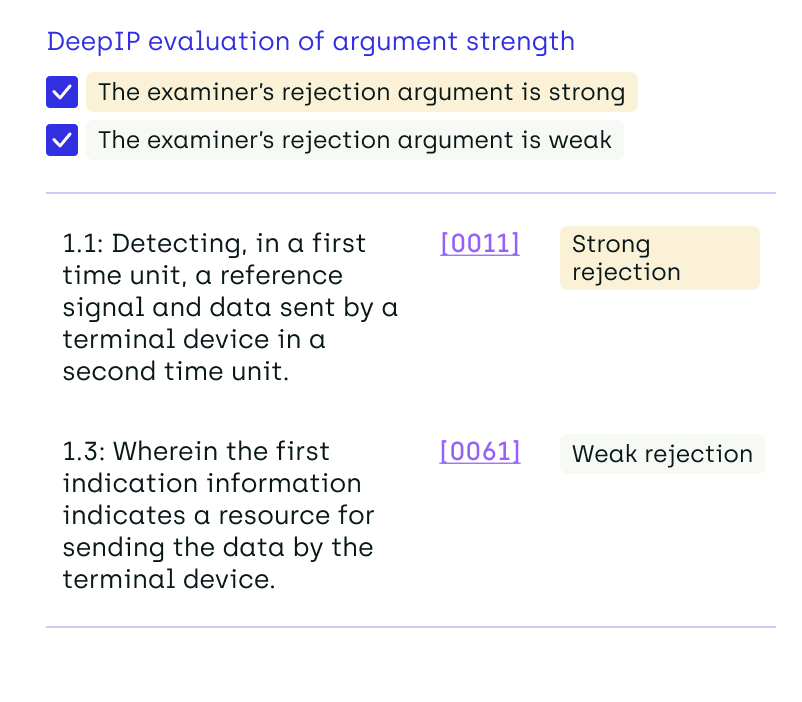

That same statistical bias makes AI algorithms extremely effective at generating rejection logic. AI red team agents can be instructed to generate rejections under §101, §112, §102/103, and related doctrines. These analyses all follow common language and logic patterns that AI reproduces reliably and rapidly.

What is not immediately obvious is that AI’s accommodating generative patterns make role-morphing important in red team agents. Beginning in an explicitly skeptical role counteracts AI’s natural tendency toward agreement by directly prompting output that attacks the draft: identify where claims appear abstract (e.g., §101 exposure), where disclosure seems thin (e.g., §112 exposure), or where asserted “technical effects” are not actually supported by the description. The purpose is not to decide definitively what fails, but to surface common arguments that are statistically likely to be applied based on the draft as it is currently written.
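As a minimal sketch of that skeptical opening, the three checks named above can be kept as data and rendered into a single prompt. The names `SKEPTICAL_CHECKS` and `build_skeptical_prompt` are hypothetical, chosen only for illustration, and the `Claims under review:` label is an assumption. The sketch only assembles text; how the prompt is sent to a model is deliberately left out.

```python
# Hypothetical mapping of the exposure checks described above to skeptical
# instructions. The labels and wording are illustrative, not a fixed taxonomy.
SKEPTICAL_CHECKS = {
    "§101": "Identify where the claims appear abstract.",
    "§112": "Identify where the disclosure seems thin.",
    "technical effect": (
        "Identify asserted technical effects that the description "
        "does not actually support."
    ),
}


def build_skeptical_prompt(claims: str, checks: dict) -> str:
    """Render a skeptical-examiner prompt with one bullet per check."""
    lines = ["Act as a skeptical USPTO examiner.", ""]
    for label, instruction in checks.items():
        lines.append(f"- [{label}] {instruction}")
    # The claim text goes last so the instructions are read first.
    lines += ["", "Claims under review:", claims.strip()]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_skeptical_prompt("1. A method comprising ...", SKEPTICAL_CHECKS))
```

Keeping the checks as data rather than prose makes it easy for the practitioner, not the model, to decide which legal tests are in play for a given draft.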

However, leaving AI permanently in a skeptical role is also unproductive. Over a conversation, it can drift into ‘reverse sycophancy’: instead of agreeing with everything, it continues accommodating your defined behavior by saying “no” to everything. This actually suppresses clues from the context—ignoring hints already present in the draft that could be amplified to obviate challenges the agent raised.

AI’s fuller value emerges when the agent’s role is deliberately shifted within the same context—from skeptical to helpful.

As an example, a prompt might look like:

Act as a skeptical USPTO examiner.

[STEP 1] Generate 5 grounds of rejection for unsupported limitations of these claims.

[STEP 2] Then, switch roles to a cautiously rigorous but helpful retired examiner, and identify in the specification a list of hints (quote paragraph numbers) that could be developed to better support the unsupported limitations.

Having surfaced likely objections, the agent is explicitly prompted to ‘change roles’ and identify signals already present in the draft that could be strengthened and hardened to better satisfy the legal tests. In practice, this means surfacing constructive hints that can be amplified into support for the legal tests of patentability (e.g., eligibility, enablement, technical-effect): what technical detail should be expanded, what claim framing should be tightened, and what factual statements could be made explicit to anchor the record. Used this way, AI not only rapidly generates likely objections but also transforms them into a map of where human judgment can be most valuably focused.
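One way to keep the role shift deliberate and repeatable is to build the prompt from an ordered list of roles. The Python sketch below reassembles the example prompt above under stated assumptions: `RoleStep` and `build_role_morphing_prompt` are hypothetical names, and the `CLAIMS:` and `SPECIFICATION:` section labels are additions that do not appear in the original prompt. It produces text only and makes no assumption about which model or interface receives it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleStep:
    """One role the agent adopts, plus the task it performs in that role."""
    role: str
    task: str


# Wording taken from the example prompt in the article.
SKEPTIC = RoleStep(
    role="a skeptical USPTO examiner",
    task="Generate 5 grounds of rejection for unsupported limitations "
         "of these claims.",
)
ADVISOR = RoleStep(
    role="a cautiously rigorous but helpful retired examiner",
    task="Identify in the specification a list of hints (quote paragraph "
         "numbers) that could be developed to better support the "
         "unsupported limitations.",
)


def build_role_morphing_prompt(steps, claims: str, specification: str) -> str:
    """Assemble one prompt that opens in the first role and then switches."""
    if not steps:
        raise ValueError("at least one role step is required")
    lines = [f"Act as {steps[0].role}.", ""]
    for number, step in enumerate(steps, start=1):
        if number == 1:
            lines.append(f"[STEP {number}] {step.task}")
        else:
            # Later steps state the role change explicitly.
            lines.append(
                f"[STEP {number}] Then, switch roles to {step.role}. {step.task}"
            )
        lines.append("")
    # Assumed section labels for the draft material under review.
    lines += ["CLAIMS:", claims.strip(), "", "SPECIFICATION:", specification.strip()]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_role_morphing_prompt(
        [SKEPTIC, ADVISOR],
        claims="1. A method comprising ...",
        specification="[0001] ...",
    ))
```

Because the order of `steps` fixes the skeptical role first and the constructive role second, the sequence cannot silently collapse into a single agreeable (or single contrarian) pass.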

How to Effectively Harness the Power of Patent AI

Four key practical lessons come to the fore:

- Definition and maintenance of novelty is a responsibility that should remain human-owned throughout prosecution.

- AI is valuable as a rejection simulator in red teaming exercises before and during application drafting, surfacing predictable but possibly unexpected weaknesses early, while there is still flexibility.

- Beginning with a skeptical role and then deliberately shifting to a constructive role amplifies the strengths of AI by avoiding positive or negative sycophantic accommodation patterns.

- Success is measured not by smoother, faster drafts, but by reduced post-filing surprises and cleaner prosecution records.

Used in this way, AI becomes a powerful tool that amplifies the productivity of human experts. Far from replacing the judgment of real intelligence, AI increases its impact by efficiently revealing where our judgment matters most.

FAQ: Human-Led AI Workflows in Patent Practice

Is AI Ready to Be Trusted in Patent Prosecution Workflows?

AI is ready—but only when paired with active human oversight. The technology itself works as designed. The risk lies in treating AI as an autonomous drafter rather than a powerful tool that requires continuous direction from a skilled practitioner.

What Is "Delegated Novelty" and Why Does It Matter?

Delegated novelty is the mistake of defining what makes an invention patentable once at the outset, then handing off the drafting to AI without ongoing supervision. Because novelty must be continuously maintained against developing claim scope and evolving legal standards, checking out after that initial input is one of the most common—and costly—mistakes practitioners make with AI.

What Is Predictive Drift, and How Do I Avoid It?

Predictive drift is the tendency of AI to gradually replace invention-specific substance with familiar, conventional language as a draft progresses. The draft can actually improve stylistically while degrading substantively. The remedy is regular, critical human review throughout the drafting process—not just at the end.

What Is AI Red Teaming in Patent Practice?

AI red teaming means deliberately prompting an AI agent to simulate the objections a USPTO examiner would raise (under §101, §102/103, §112, and related doctrines) before an examiner ever raises them. The goal is to surface predictable weaknesses early, when wording and strategy can still be adjusted, rather than discovering them after the record is locked.

How Does Role-Morphing Improve AI Red Teaming?

A permanently skeptical AI agent can drift into "reverse sycophancy"—reflexively rejecting everything and suppressing useful signals already present in the draft. By explicitly shifting the agent's role from skeptical examiner to constructive advisor within the same context, practitioners can surface both likely objections and the specification hints that could be developed to address them.

Does Using AI Red Teaming Mean the Application Will Never Face Rejection?

No. Prosecution rejections and validity challenges will never be entirely eliminated. What red teaming does is expose predictable weaknesses early—when they can be addressed—increasing the overall strength of the application and preserving strategic optionality before filing.

How Should Success with AI in Patent Practice Be Measured?

Not by faster or smoother drafts, but by reduced post-filing surprises and cleaner prosecution records. The real productivity gain from AI comes when human practitioners retain ownership of patentability while AI amplifies what their experience and judgment can accomplish.
