AI Compliance in Patent Work: A Shift From Productivity to Responsibility

AI tools have spread quickly across patent work. Drafting, prior art search, office action responses—there is now a tool for each step, and in many teams they are already part of day-to-day work.

What has changed just as quickly is the level of caution around them.

The question is no longer whether AI can save time. It is whether it can be used without creating problems later—leaking confidential information, introducing subtle errors, or exposing firms to risk they did not intend to take on.

That is why most serious evaluations of AI tools now start in the same place:

- What happens to the data once it is entered?

- Is anything stored or reused?

- How reliable are the outputs in practice?

- Who is responsible if something slips through?

Those questions sit much closer to professional duty than to technology.

From AI Adoption to AI Governance in IP Practice

A year ago, many lawyers were testing AI tools on their own. That phase did not last long.

Today, AI sits inside procurement reviews, IT security checks, and client conversations. Some corporate IP teams already require outside counsel to confirm how AI is used before work even begins. Others ask for formal approval before certain tools can be used at all.

Internally, firms are starting to formalize this into policy. The details vary, but most frameworks cover the same ground:

- Which tools are approved

- How confidential data can be handled

- When clients need to be informed or consulted

- How usage is tracked

None of this is especially complicated. It just reflects the fact that AI is no longer experimental.
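As an illustration only, the four policy areas above could be captured in a small structure that answers "may this tool be used here?" and records each decision. This is a minimal sketch, not a standard or any firm's actual policy: the class `AIUsagePolicy`, its fields, and the tool and client names are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AIUsagePolicy:
    """Hypothetical firm policy covering the four areas listed above."""
    approved_tools: set[str]              # which tools are approved
    confidential_ok: set[str]             # tools cleared for confidential data
    clients_requiring_consent: set[str]   # clients who must be consulted first
    usage_log: list[dict] = field(default_factory=list)  # how usage is tracked

    def check(self, tool: str, client: str, confidential: bool,
              client_consented: bool) -> tuple[bool, list[str]]:
        """Return (allowed, reasons) and log the decision either way."""
        reasons = []
        if tool not in self.approved_tools:
            reasons.append("tool not approved")
        if confidential and tool not in self.confidential_ok:
            reasons.append("tool not cleared for confidential data")
        if client in self.clients_requiring_consent and not client_consented:
            reasons.append("client consent required")
        allowed = not reasons
        self.usage_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "tool": tool,
            "client": client,
            "allowed": allowed,
            "reasons": reasons,
        })
        return allowed, reasons


# Example with made-up tool and client names.
policy = AIUsagePolicy(
    approved_tools={"drafting-assistant", "search-assistant"},
    confidential_ok={"drafting-assistant"},
    clients_requiring_consent={"Client A"},
)
allowed, reasons = policy.check(
    "search-assistant", "Client A", confidential=True, client_consented=False
)
```

In this example the request is refused for two reasons: the tool is approved but not cleared for confidential material, and the client has not yet consented. The log keeps refusals as well as approvals, which is usually what a later audit needs.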

Codes of Conduct Are Evolving—But the Core Principles Are Not

Professional guidance is starting to catch up. The Institute of Professional Representatives before the European Patent Office (epi) has published one of the more detailed sets of guidelines so far.

The substance is familiar. Confidentiality, accuracy, independent judgment—these are long-standing obligations.

What has changed is the way they can be breached.

A prompt can contain the full technical detail of an invention. An output can read well while quietly introducing errors. And in many cases, it is not obvious what the system is doing with the information it receives.

That combination makes routine tasks less straightforward than they used to be.

Where AI Creates Risk in Patent Workflows

How does AI create confidentiality risks in patent drafting?

The risk starts with the input.

Patent drafting often involves material that is both commercially sensitive and not yet protected. Feeding that into a system that stores or learns from inputs creates exposure that is difficult to control afterward.

In practice, the issues tend to fall into a few categories:

- Data being retained outside the firm’s systems

- Information reused in ways that are not visible to the user

- Uncertainty about how long inputs persist

For teams working on early-stage R&D, that is usually enough to slow adoption.

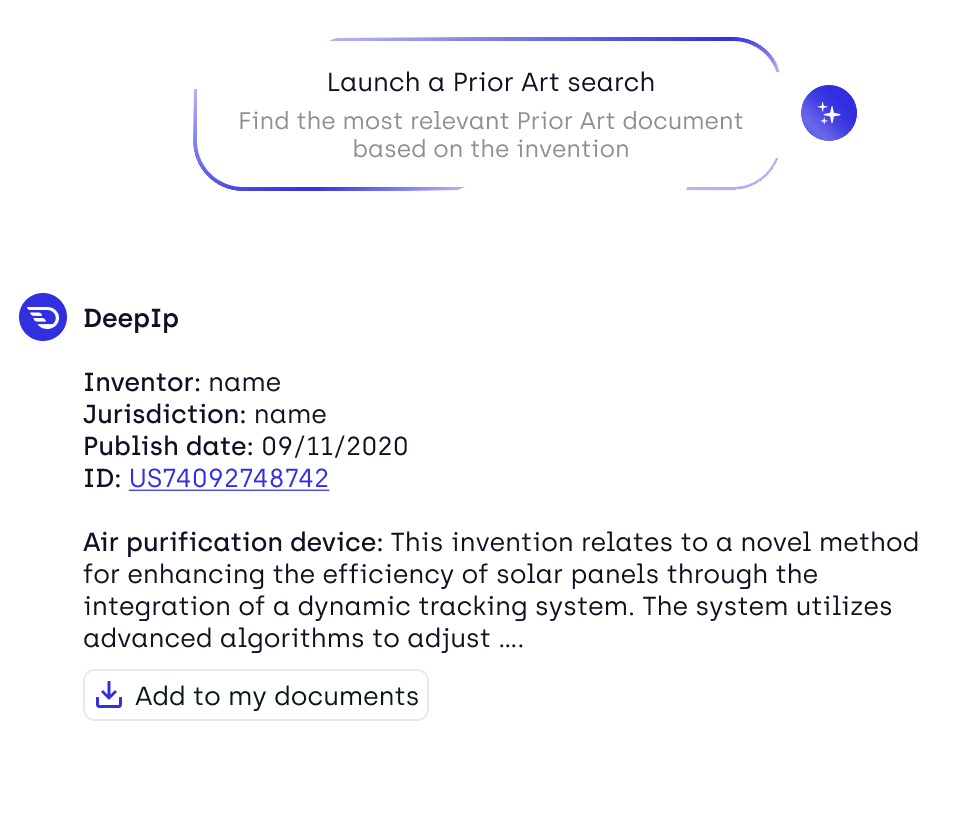

What are the risks of AI in prior art search and patentability analysis?

Prior art search is where AI has been most immediately useful. Results come faster, and in many cases they are easier to navigate. But faster results can create a different kind of problem. It becomes easier to accept what is returned as complete.

The gaps are not always obvious. A missed reference or a weak interpretation may only become visible later, when it is harder to correct. That is why many practitioners still treat AI-assisted search as a first pass rather than a final answer, even when using advanced AI patentability analysis platforms.

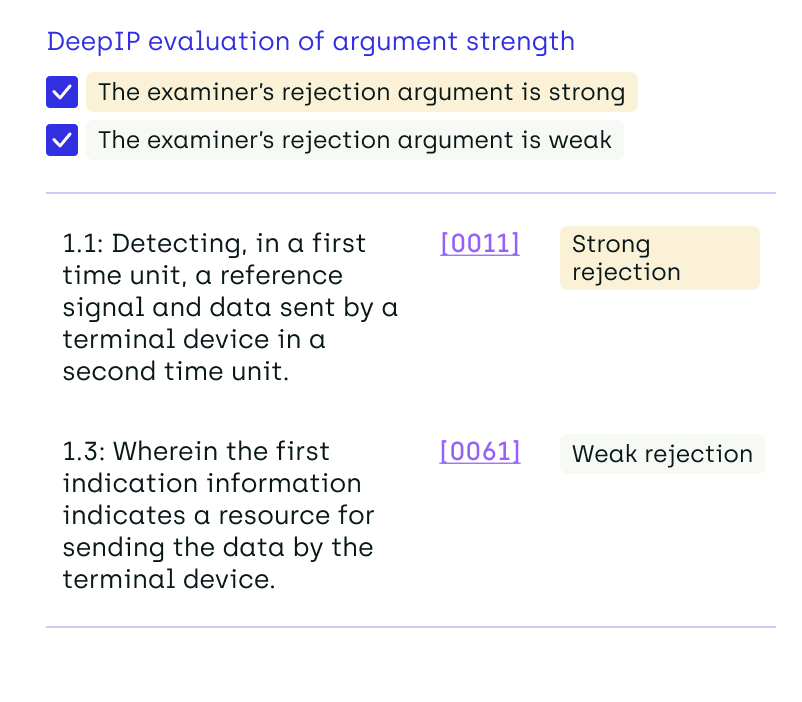

Can AI-generated office action responses introduce legal risk?

They can, and often in ways that are easy to overlook. AI is good at producing structured text. That makes it easier to generate a response that looks convincing on the surface. The issue is whether the reasoning holds up.

Typical failure points include:

- Citations that do not fully support the argument

- Reasoning that skips a step

- Language that sounds precise but is not

None of this is new in legal work. The difference is the speed at which it can now happen.

Turning Compliance Principles Into Operational Decisions

What does AI compliance require in day-to-day patent practice?

Most of the obligations are already familiar. The difference is how often they come into play.

A lot depends on understanding how a tool handles data. Some systems retain inputs. Others are designed not to. That distinction alone determines whether a tool can be used for certain types of work.

There is also a shift in how time is spent. Drafting may be faster, but review takes on more weight. The expectation is still the same: the final document needs to meet professional standards.

In many cases, there is also a client dimension. Some organizations want explicit approval before AI tools are used. Others are less formal but still expect to be informed.

What Defines a Secure AI Patent Platform Today

What features define a secure AI patent tool?

There is now a fairly clear baseline.

At a minimum, firms tend to look for:

- No retention of user inputs

- No use of client data for training

- Encryption of data at rest and in transit

- Separation between users and clients

- Clarity on how the system processes information

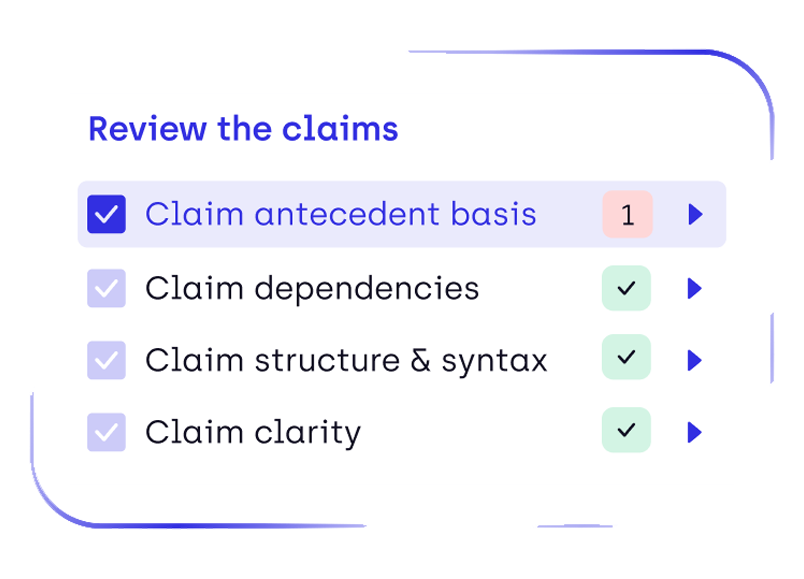

Beyond that, the more useful platforms tend to include checks—flagging missing elements or inconsistencies rather than simply producing text.

That combination is what most teams now expect from tools positioned as enterprise-grade AI patent software.
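During procurement, the baseline above can be treated as a simple checklist. The sketch below is one hypothetical way to do that; the keys in `BASELINE` and the function `unmet_requirements` are invented for illustration and do not correspond to any real questionnaire format. A missing answer is treated as unmet, on the assumption that silence from a vendor should not count in its favor.

```python
from __future__ import annotations

# Baseline items from the list above, keyed by hypothetical identifiers.
BASELINE = {
    "no_input_retention": "No retention of user inputs",
    "no_training_on_client_data": "No use of client data for training",
    "encryption_at_rest_and_in_transit": "Encryption of data at rest and in transit",
    "user_and_client_separation": "Separation between users and clients",
    "processing_transparency": "Clarity on how the system processes information",
}


def unmet_requirements(vendor_answers: dict[str, bool]) -> list[str]:
    """Return the baseline items a vendor has not confirmed.

    Any item that is absent from vendor_answers counts as unmet.
    """
    return [
        label
        for key, label in BASELINE.items()
        if not vendor_answers.get(key, False)
    ]


# Example: a made-up vendor that confirms only three of the five items.
answers = {
    "no_input_retention": True,
    "no_training_on_client_data": True,
    "encryption_at_rest_and_in_transit": True,
    "user_and_client_separation": False,
}
gaps = unmet_requirements(answers)
```

Here `gaps` contains the separation item, which was answered "no", and the transparency item, which was never answered.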

How DeepIP Aligns With AI Compliance Requirements

How does DeepIP ensure AI compliance and data security?

DeepIP addresses these concerns directly in its design.

The platform runs on a zero-retention API. Inputs are not stored and are not used for training. That removes one of the main uncertainties practitioners have when working with generative AI.

On the security side, it follows established standards:

- AES-256 encryption for data at rest

- TLS 1.2+ encryption for data in transit

- Alignment with ISO 27001, SOC 2 Type II, GDPR, HIPAA, and ISO 42001 for AI management systems
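A "TLS 1.2+" requirement can also be enforced from the client side. The following sketch uses Python's standard `ssl` module to build a context that refuses anything older than TLS 1.2. It illustrates the general technique only and says nothing about how DeepIP implements it; the function name `make_client_context` is an assumption made for this example.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client TLS context that rejects protocol versions below 1.2."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Refuse to negotiate TLS 1.0 or 1.1, even if the server offers them.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_context()
```

A context like this can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=context)`, so that a connection to a server offering only older protocol versions fails rather than silently downgrading.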

Data is also separated across users and organizations, which matters for firms handling multiple clients.

The tools themselves are built to support review. They surface issues such as missing formalities, inconsistencies, and structural gaps, but do not replace the practitioner’s role in validating the work. That approach is broadly in line with how professional guidance is evolving. AI can assist, but responsibility stays with the person signing the document.

AI Governance as a Practical Advantage for IP Teams

The firms that are moving fastest with AI are not necessarily the ones using the most tools. They are the ones that have decided how those tools are used.

Clear rules, predictable workflows, and tools that meet basic security expectations remove a lot of hesitation. Work moves faster because fewer questions need to be asked each time.

Without that structure, adoption tends to stall, not because the tools are unusable but because the risks are unclear. Over time, the gap between firms that have settled these questions and those that have not is likely to widen.

FAQ: AI Compliance for Patent Professionals

What does AI compliance mean in patent practice?

It refers to how AI tools are used within existing professional obligations, particularly around confidentiality, accuracy, and accountability.

Is it safe to use AI for patent drafting?

It depends on how the system handles data and how outputs are reviewed. Tools that do not retain inputs are generally easier to use in sensitive contexts.

What are the main risks of AI in patent law?

The main concerns are data exposure, unreliable outputs, and limited visibility into how systems process information.

Do patent attorneys need client consent to use AI tools?

In some cases, yes. This depends on internal policies and client expectations.

How can firms evaluate AI patent tools from a compliance perspective?

By looking at data handling, security standards, transparency, and whether the tool supports proper review.

How does DeepIP address AI compliance concerns?

By avoiding data retention, applying established security standards, separating client data, and supporting practitioners in reviewing outputs.
